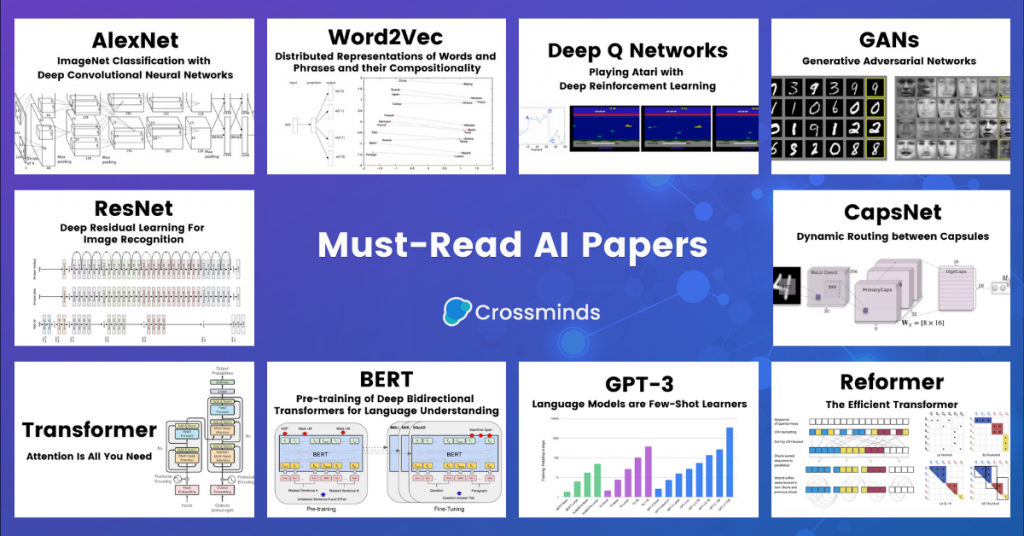

From AlexNet to GPT-3, we curate a list of 10 papers that mark significant research advancements in machine learning, deep learning, computer vision, NLP, and reinforcement learning over the past 10 years. Author presentations and detailed paper reviews are also included.

We have put together a list of the 10 most cited and discussed machine learning research papers published over the past 10 years, from AlexNet to GPT-3. They are great reading for researchers new to the field and good refreshers for experienced researchers. For each paper, we provide links to a short overview, author presentations, and a detailed paper walkthrough, for readers with different levels of expertise.

1. ImageNet Classification with Deep Convolutional Neural Networks (AlexNet)

Authors: Alex Krizhevsky, Ilya Sutskever, Geoffrey E. Hinton (University of Toronto)

Published in 2012 (NIPS 2012)

Abstract:

We trained a large, deep convolutional neural network to classify the 1.2 million high-resolution images in the ImageNet LSVRC-2010 contest into the 1000 different classes. On the test data, we achieved top-1 and top-5 error rates of 37.5% and 17.0% which is considerably better than the previous state-of-the-art. The neural network, which has 60 million parameters and 650,000 neurons, consists of five convolutional layers, some of which are followed by max-pooling layers, and three fully-connected layers with a final 1000-way softmax. To make training faster, we used non-saturating neurons and a very efficient GPU implementation of the convolution operation. To reduce overfitting in the fully-connected layers we employed a recently-developed regularization method called “dropout” that proved to be very effective. We also entered a variant of this model in the ILSVRC-2012 competition and achieved a winning top-5 test error rate of 15.3%, compared to 26.2% achieved by the second-best entry.
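The abstract's "60 million parameters" and its choice to apply dropout to the fully-connected layers are easier to appreciate with a per-layer count. The sketch below is a back-of-the-envelope calculation, not the authors' code. It assumes the layer shapes given in the paper's architecture section (they are not stated in the abstract) and the original two-GPU split, in which conv2, conv4 and conv5 see only half of the previous layer's channels (modelled here as a hypothetical `groups=2` argument). The helper names `conv_params`, `fc_params` and `summarize` are illustrative only.

```python
# Back-of-the-envelope parameter count for AlexNet (weights + biases).
# Assumption: layer shapes follow the paper's architecture section, with the
# two-GPU split modelled as groups=2 for conv2, conv4 and conv5.


def conv_params(out_channels, kernel, in_channels, groups=1):
    """Weights + biases of a 2-D convolution with square kernels."""
    weights = out_channels * kernel * kernel * (in_channels // groups)
    return weights + out_channels


def fc_params(in_features, out_features):
    """Weights + biases of a fully-connected layer."""
    return in_features * out_features + out_features


LAYERS = {
    "conv1": conv_params(96, 11, 3),
    "conv2": conv_params(256, 5, 96, groups=2),
    "conv3": conv_params(384, 3, 256),
    "conv4": conv_params(384, 3, 384, groups=2),
    "conv5": conv_params(256, 3, 384, groups=2),
    # conv5 output after the last max-pooling layer is 6 x 6 x 256
    "fc6": fc_params(6 * 6 * 256, 4096),
    "fc7": fc_params(4096, 4096),
    "fc8": fc_params(4096, 1000),  # feeds the final 1000-way softmax
}


def summarize(layers):
    """Return (conv total, fully-connected total, overall total)."""
    conv = sum(v for k, v in layers.items() if k.startswith("conv"))
    fc = sum(v for k, v in layers.items() if k.startswith("fc"))
    return conv, fc, conv + fc


if __name__ == "__main__":
    for name, count in LAYERS.items():
        print(f"{name:>5}: {count:>11,}")
    conv, fc, total = summarize(LAYERS)
    print(f"conv total: {conv:,}")
    print(f"fc total:   {fc:,}")
    print(f"all layers: {total:,} ({fc / total:.1%} in fully-connected layers)")
```

Under these assumptions the total comes to about 61.0 million, in line with the abstract's rounded figure, and roughly 96% of the parameters sit in the three fully-connected layers. That concentration is one way to see why the authors targeted dropout at those layers to curb overfitting.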

[Paper]

Watch a video explaining the paper: